Oil and the Digital Voice

“The Oleaginous Voice: Auto-Tune, Linear Predictive Coding, and the Security-Petroleum Complex”, my contribution to History and Technology’s special issue on “Oil Spillovers”, is now available via open access!

Some documentation of an audio-visual installation on “crypto-minding” I contributed to:

A century of manic industrialization has left us with ample cheap energy, real estate, and infrastructure around the world. A growing wave of “crypto-entrepreneurs” has been converting decommissioned industrial spaces into “crypto mining” facilities, burning carbon-based fuels to solve endless algorithms and create dubious value. This showcase highlights various computational techniques to engage with and address community concerns surrounding the externalized impacts of the Greenidge Bitcoin Mining power plant on Seneca Lake in New York state, through specific interventions into questions regarding public sentiment, counter-surveillance, and thermal water pollution. This is about exploring not only the production of public knowledge but also how to co-create computer-assisted repertoires of contention for radical social organizing and political communication.

I have a new article out in the latest issue of Science for the People. Check it out here

New York’s Department of Environmental Conservation (DEC) has repeatedly delayed its permitting decision regarding a controversial Bitcoin mining power plant on Seneca Lake, citing in part the need to review the thousands of public comments it has received on the issue. I wanted to find out what sorts of sentiments were expressed in the public comments, so I filed a Freedom of Information Law (FOIL) request with the DEC. They sent me a thumb drive with 3,919 files on it (mostly PDFs, some Word documents). Even my relatively new iMac desktop had trouble scrolling through all of the files.

Fortunately, Cornell Information Science PhD student Marina Zafiris was able to use text analysis tools to make the dataset more tractable. Most of the file names contained phrases that conveyed the commenter’s sentiment (“Deny Greenidge’s Title V Air Permit”, for example). Many of the documents could also be grouped together as having originated from the same organizing campaign, and could be sorted through quickly for that reason. I should say that even in the cases where similar language was used, many of the comments contained additional details about the commenter’s specific concerns and stakes in the issue.
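For readers curious what a filename-based first pass might look like, here is a minimal Python sketch. It is not the code Marina actually used. The keyword lists, function names, and example file names are my own assumptions for illustration; only the “Deny Greenidge’s Title V Air Permit” phrasing comes from the real dataset.

```python
from collections import Counter

# Hypothetical keyword lists (assumptions for illustration only).
# Only the "deny" phrasing is taken from the example quoted in the post.
OPPOSE_KEYWORDS = ("deny", "oppose", "reject")
SUPPORT_KEYWORDS = ("approve", "support", "renew")


def classify_filename(name: str) -> str:
    """Assign a rough stance label based on keywords found in a file name."""
    lowered = name.lower()
    opposed = any(k in lowered for k in OPPOSE_KEYWORDS)
    in_favor = any(k in lowered for k in SUPPORT_KEYWORDS)
    if opposed and not in_favor:
        return "opposed"
    if in_favor and not opposed:
        return "in favor"
    # No match, or conflicting matches: set aside for manual review.
    return "uncategorized"


def tally(names):
    """Count how many file names fall under each stance label."""
    return Counter(classify_filename(n) for n in names)


# Made-up file names, apart from the first, which echoes the post's example.
example_names = [
    "Deny Greenidge's Title V Air Permit.pdf",
    "Please approve the permit.docx",
    "Request for more information.pdf",
]
print(tally(example_names))
```

Simple substring matching like this cannot handle negation (“do not renew” would be mislabeled as in favor), which is one reason a manual pass through the files remains necessary.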

Through a combination of text analysis and going through each file manually, we were able to sort out the files into three categories: in favor of permit renewal for the plant, opposed to permit renewal, or not clearly categorizable (this last category included comments requesting more information, for example). Here are the findings:

As the above pie chart shows, we found that 98.8% of the files expressed opposition, 0.97% were in favor of permit renewal, and 0.23% could not be categorized. Marina was further able to map many of the comments based on the location information they provided. The results, screen-captured below, are available as an interactive map here

Our work has received press coverage from Rosemary Misdary at WNYC and the Gothamist, Peter Mantius at Waterfront Online, and Cody Taylor at WENY news. Our research was performed in affiliation with the Repair & Redress project at Cornell’s Dept. of Info Sci.

The cover of Orson Scott Card’s “Folk of the Fringe”, a short story collection envisioning a post-apocalyptic Utah.

I’m excited to have a piece in the latest issue of Pulse, an open-access journal of science, technology, and culture. Here is the abstract:

FROM PROVING GROUND TO BUMBLEHIVE: Touring Utah’s Weird Information Landscape

The American state of Utah has emerged as an important infrastructural and experimental hub for large-scale information science and communication technology endeavors: both Meta (formerly Facebook) and the US intelligence community maintain massive data centers just south of Salt Lake City, each of which requires over a million gallons of water per day to cool servers housing billions of gigabytes of personal data. Utah-based research services such as FamilySearch and Ancestry.com, both with roots in the Church of Jesus Christ of Latter-day Saints (also known as the Mormons), play hugely important roles in the growing online genealogical and genetic research industry, which continues to re-shape how kinship relations are conceptualized and evaluated globally. Many of the state’s municipal agencies were recently forced to cancel contracts with the AI-based software company Banjo (since renamed SafeXai), which promised to consolidate diverse information streams into a “live-time” data surveillance service, following revelations the firm’s CEO was a former member of the Ku Klux Klan. This article reads these unfolding projects through John Durham Peters’ concepts of Mormon “media theology” and “celestial bookkeeping,” as well as the fiction of Utah-rooted authors W. H. Pugmire and Orson Scott Card. It sketches a tour of Utah as a weird information landscape, wherein unfathomable quantities of data find material embodiment and the secular-rational promises and practices of Big Data reveal latent cosmic aspects.

I have a chapter on sound art and STS in the recently published Routledge Handbook of Art, Science, and Technology Studies! If you don’t have $250 to spare, I’d recommend asking your local/university library to purchase a copy :) Many thanks to the brilliant Annea Lockwood, who provided the image and valuable feedback on the essay! Here’s the first page:

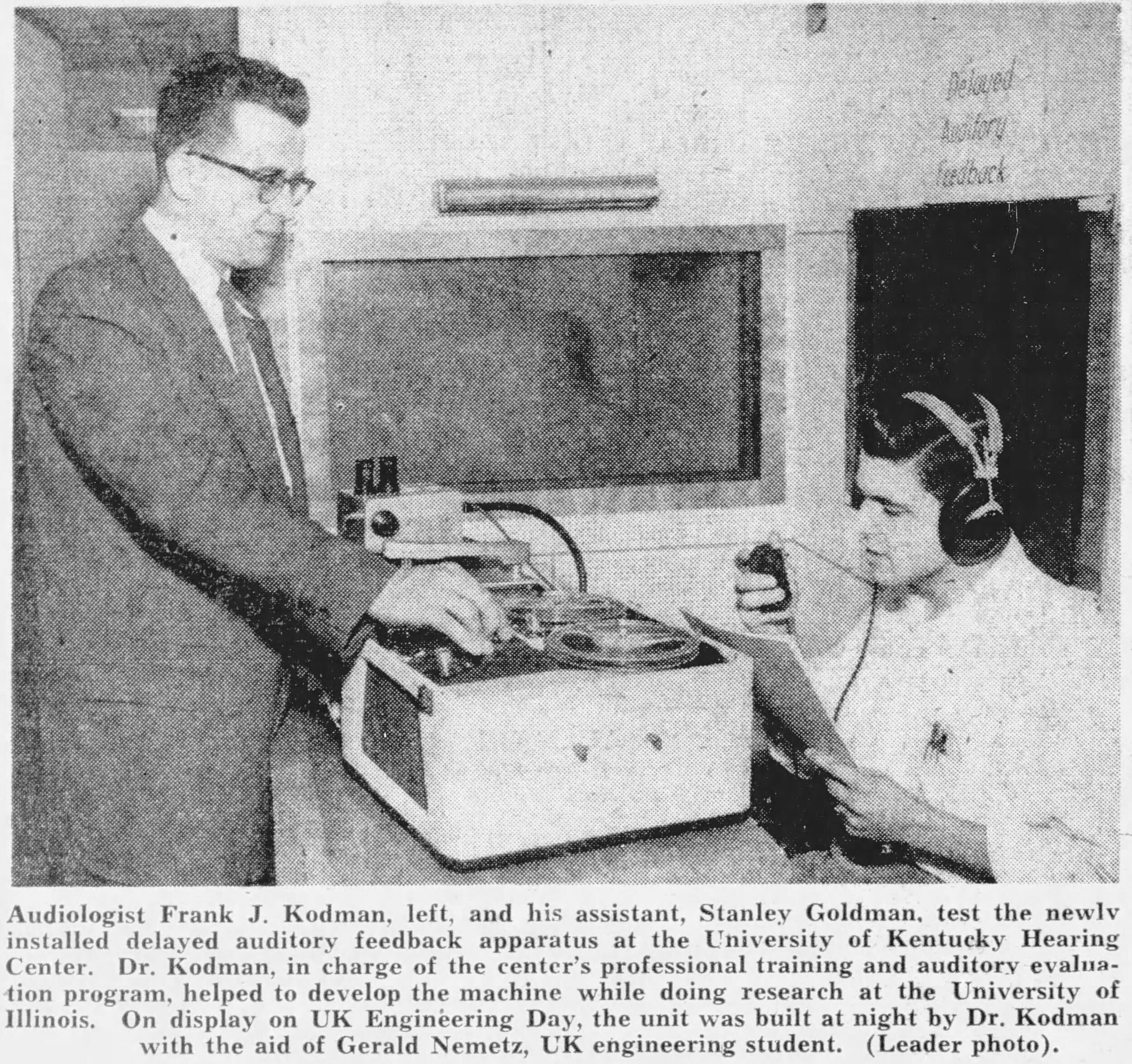

I’m presenting my Delayed Auditory Feedback article at the next Cornell Media Studies Colloquium. It will take place October 22nd at noon Eastern Time in the Media Room, 311 Uris Library. Planning on having a Zoom stream as well, TBA. Details HERE!

My article on delayed auditory feedback is now out in the journal Technology and Culture!

The entire issue is excellent and full of sound- and media-related historiography, such as Amanda Beardsley’s piece on the gender politics of architectural acoustics in the Mormon church and Jan Hua-Henning’s piece on fire alarm telegraphy in imperial Germany.

Photo, taken from page 22 of the Lexington Leader newspaper from Lexington, Kentucky, June 21, 1955.

My article on the forgotten sonic dimension of insect feeding research is now available online at Science, Technology, and Human Values!

I was fortunate to get to contribute some sound design to the online production of re:CLICK being put on next week by UC Davis and Northwestern. You can RSVP at: https://re-click.ucdavis.edu

I’m excited to be part of Cornell Media Studies’ “Media Objects” conference this fall. I’m presenting at the first session, on “Materiality/Immateriality” at 3:30pm NY time October 23rd. There are some initial think pieces available here: mediastudies.as.cornell.edu/media-objects-conference

I’ll be discussing the Electrical Penetration Graph (EPG), which I’ve nicknamed the “aphid synthesizer”.

I just came across a wonderful short essay from 1992 (translated 2001) by Paolo Virno, called The Two Masks of Materialism.

Virno suggests that materialists are fundamentally interested in the unity of life and philosophy. To that end, they don two kinds of “character masks”:

the “sociologist of knowledge” tells you about theory’s non-theoretical origins

the “sensationist” recovers the sensible basis of abstract thought, its specific pleasures and pains.

He argues that, in our present “mature capitalism”, the all-pervasive role of technological mediations as materialized theories means that sensation is no longer the starting point, but rather the end-point. He generalizes Bachelard’s claim about laboratory science: “sensual learning is no longer a point of departure, it is not even a mere guide: it is an end”.

This is good news for the materialist, though it requires the masks to be worn in a slightly different way:

The sociologist of knowledge can no longer “go about looking for ‘vital’ residues in one theory or another, but must instead identify and describe a specific form of life on the basis of the type of knowledge which permeates it.” This method, which begins with the experience of work, is how to get at the sociological and material aspects of contemporary existence by considering pure theory as material fact.

(To paraphrase, the routine becomes: tell me what you think, I’ll tell you how you work, what relations you entertain, what your interests and least-reflected feelings and impulses are.)

For the sensationist, the recent inversion of the theory/sensation sequence solves the problem that persists whenever it is taken for granted that sense-data comes before theory (a situation wherein a materialist insistence on including pleasure and pain inevitably comes off as “quarrelsome and impotent”). If sensual perception now always comes after theory, then pleasures and pains appear as culminating accomplishments. Furthermore, because they are experienced as pleasures and pains rather than disinterested/disembodied knowledge, they become preludes to politics.

I’m excited to announce a piece on sound art in the forthcoming Routledge Handbook of Art, Science & Technology Studies. Here is the abstract:

Infrastructural Inversions in Sound Art and STS

This chapter puts the fields of sound art and science and technology studies (STS) into methodological and theoretical conversation. Specifically, it argues that the gesture of “infrastructural inversion” developed by STS scholars Geoffrey Bowker and Susan Leigh Star describes a common topic and tactic across the worlds of STS and sound art, as demonstrated through an analysis of works by composers Annea Lockwood and Alvin Lucier. This shared critical sensibility can be traced in part to the intertwined historical roots of STS and sound art’s respective topics, i.e. experimental science and music understood as a sound-based art form, as distinguished from the musica universalis of the classical liberal arts curriculum. Contemporary use of non-speech sound to convey scientific findings, known as “data sonification”, thus represents a continuation of this relationship between science and sound art, albeit in an inverted and occasionally absurd way. While work at the intersection of STS and the interdisciplinary field of Sound Studies has explored this relationship empirically, the combination of STS and sound art practices in a “making and doing” context remains promising but unexplored.

Twenty-eight TED talks played simultaneously for four and a half minutes

Here’s a sonification I made based on data from this paper from Matthias Hess’ Animal Science lab at UC Davis.

Very exciting multi-day event in celebration of Cornell’s Moog history, featuring Suzanne Ciani among others! Details here